The question every founder asks: when will AI find me?

Every new business owner we talk to has the same question: When will ChatGPT know about me? When will Claude cite us? When does Perplexity start listing us as an option?

Every honest answer starts with “it depends.” Then comes a list of variables - existing citations, discoverability, training-data freshness per model, retrieval vs. training pipelines, content age. Useful, but not a number.

Our test subject: a brand-new GEO website with zero outside signal

llmcartel.com went live a few days ago. The starting state is deliberately minimal in the ways that matter for this experiment:

- No third-party citations that we know of. No press, no podcast spots, no guest posts, no SaaS directory listings, no Reddit threads.

- No paid distribution. No ads, no PR push, no link buys.

- A fully populated GEO foundation, because we are a GEO agency and that’s the floor:

- Fully semantic HTML (no div soup, no client-side framework bloat)

- robots.txt with explicit Allow: directives for every major LLM crawler (GPTBot, ClaudeBot, PerplexityBot, Google-Extended, Applebot-Extended, Bytespider, CCBot, Cohere, Diffbot, Mistral, You.com, Meta, Amazon, etc.)

- /llms.txt and /llms-full.txt per the llmstxt.org standard

- sitemap.xml with lastmod per page

- JSON-LD structured data on every page (Organization, Service, OfferCatalog, FAQPage, AboutPage, ContactPage, Person)

- Open Graph + Twitter Card + Apple touch icon + PWA manifest

- WCAG 2.2 AA accessibility (ongoing, see ada changelog)

- Static HTML with compressHTML: false so view-source is human- and LLM-readable
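To make the robots.txt item concrete, here is a minimal TypeScript sketch that emits one explicit Allow group per crawler. The helper name buildRobotsTxt, the shortened crawler list, and the sitemap URL are assumptions for illustration, not the site's actual build code.

```typescript
// Hypothetical helper: one "User-agent / Allow" group per crawler token.
// The list is an illustrative subset of the crawlers named above.
const LLM_CRAWLERS: string[] = [
  "GPTBot",
  "ClaudeBot",
  "PerplexityBot",
  "Google-Extended",
  "Applebot-Extended",
  "CCBot",
];

function buildRobotsTxt(agents: string[], sitemapUrl: string): string {
  const groups = agents.map((agent) => `User-agent: ${agent}\nAllow: /`);
  // Catch-all group for every crawler not named explicitly.
  groups.push("User-agent: *\nAllow: /");
  return groups.join("\n\n") + `\n\nSitemap: ${sitemapUrl}\n`;
}

// Assumed sitemap location, based on the /sitemap.xml path mentioned in this post.
console.log(buildRobotsTxt(LLM_CRAWLERS, "https://llmcartel.com/sitemap.xml"));
```

Naming each crawler is redundant next to the catch-all group, but it makes the invitation explicit for anyone reading the file.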

That’s the experimental “stimulus.” A new business with a clean foundation and zero outside signal. The question is what the answer engines do with that.

The day-zero gotcha: robots.txt vs Cloudflare Bot Fight Mode

Before we could honestly start the clock, we had to fix our own infrastructure.

llmcartel.com is hosted on Cloudflare Pages. By default, Cloudflare’s free tier turns on a feature called Bot Fight Mode. If you’ve never heard of it: Bot Fight Mode is a heuristic + JavaScript-challenge layer that sits in front of every request and tries to block automated traffic. It does this with three mechanisms:

- Heuristic scoring. Suspicious-looking requests get challenged or blocked at the edge based on user-agent patterns, IP reputation, and request fingerprinting.

- Browser Integrity Check. A lightweight check that looks for browser-like behavior.

- JavaScript Detection (JSD). Cloudflare injects a script (/cdn-cgi/challenge-platform/scripts/jsd/main.js) into every HTML response. The script runs in the visitor’s browser, looks for headless/automation signals, and reports back. If you don’t run JS, you fail.

The first time we ran Lighthouse on the live site, our Best Practices score capped at 81 with a deprecation warning we couldn’t fix in our own code: that JSD script uses deprecated SharedStorage and FLEDGE APIs. We weren’t running any third-party trackers or analytics with that issue. The hit was coming from Cloudflare itself, which led us straight to the second half of the gotcha:

robots.txt is a polite request, not a wall.

We had spent days writing the most explicit AI-bot-welcome robots.txt we could write. Allow: / for every named LLM crawler. Then Cloudflare, the network in front of the site, was challenging the same crawlers with a JavaScript check that most LLM bots don’t run.

We were turning away, at the network layer, the same crawlers we were inviting at the policy layer.

This is the double gotcha:

- Layer 1 (you): robots.txt says yes.

- Layer 2 (your CDN): challenges everything that isn’t a full browser.

AI crawlers typically issue plain GET requests without a JS runtime. Cloudflare’s JSD script was injecting into responses they did receive, but they couldn’t and wouldn’t execute it, and on the next visit the heuristic layer would have them flagged as “suspicious automated traffic.”

Most teams launching on Cloudflare Pages will have this configured exactly the way ours was: on by default, quiet, looking like protection but working against the very crawlers the site is trying to attract.
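If you want to check whether your own pages carry the injected script, a rough TypeScript sketch follows. It fetches a page with a plain GET and looks for the challenge-platform path quoted above. The names hasChallengeScript and probe are hypothetical, and a request sent from your own IP with a spoofed user-agent will not be scored the way a real crawler is, so this confirms injection only, not blocking.

```typescript
// Minimal fetch-like type so the sketch does not depend on DOM typings.
type FetchLike = (
  url: string,
  init?: { headers?: Record<string, string> },
) => Promise<{ status: number; text(): Promise<string> }>;

// Path prefix taken from the JSD script URL quoted earlier in this post.
const CHALLENGE_PATH = "/cdn-cgi/challenge-platform/";

function hasChallengeScript(html: string): boolean {
  return html.includes(CHALLENGE_PATH);
}

// Hypothetical probe: plain GET, no JavaScript execution, like most crawlers.
async function probe(
  fetchImpl: FetchLike,
  url: string,
  userAgent: string,
): Promise<{ status: number; challengeInjected: boolean }> {
  const res = await fetchImpl(url, { headers: { "user-agent": userAgent } });
  const body = await res.text();
  return { status: res.status, challengeInjected: hasChallengeScript(body) };
}

// Usage (Node 18+ or any runtime with a global fetch):
// probe(fetch, "https://your-site.example/", "my-test-agent").then(console.log);
```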

We know turning these protections off is kind of risky, but this is for science. We can flip them back on any time if the abuse logs start screaming.

How we fixed Cloudflare Bot Fight Mode using the API

We disabled Bot Fight Mode programmatically through the Cloudflare API. Our workflow for managing the site is:

- Cloudflare API token with zone-level write scope, stored locally outside the repo.

- Claude Code as the operator. We describe the change in plain English; Claude inspects the live state via the CF API, surfaces the tradeoff, and, with explicit approval, issues the change. Same loop for DNS, security headers, page rules, bot management, anything else exposed by the API.

- Diff-and-apply discipline. We always read current state before writing, and we always print the new state after. No silent changes.

- Cloudflare Pages preview branches. Because the site lives on Cloudflare Pages, every git branch and pull request gets its own staging subdomain (<branch>.agencysite-c71.pages.dev) so we can preview changes against a real edge before merging to main.

The actual change for Bot Fight Mode was a single PUT:

    PUT /zones/{zone_id}/bot_management
    {
      "fight_mode": false,
      "enable_js": false
    }

Three things happened immediately:

- The JSD script stopped injecting into HTML responses on cache miss.

- AI bots arriving at the front door now actually get through to the site instead of being challenged.

- Lighthouse’s Best Practices score recovered (this is what bumped our Lighthouse speed score back to a 99 / 100).

If we’d left Bot Fight Mode on, every update of this case study would be measuring a site that was rejecting the very crawlers we’d told it to invite. The post would have been fiction.
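For readers who want to script the same change, the read-before-write loop described above can be sketched in TypeScript as follows. This is an illustration under assumptions, not our actual tooling: the function names and the injected HTTP function are hypothetical, while the endpoint path and request body are the ones shown above.

```typescript
// Minimal fetch-like types so the sketch type-checks without DOM typings.
type HttpInit = { method?: string; headers?: Record<string, string>; body?: string };
type HttpFn = (
  url: string,
  init?: HttpInit,
) => Promise<{ ok: boolean; status: number; json(): Promise<unknown> }>;

const API_BASE = "https://api.cloudflare.com/client/v4";

// Builds the PUT shown above. zoneId and token are supplied by the caller.
function buildDisableRequest(zoneId: string, token: string): { url: string; init: HttpInit } {
  return {
    url: `${API_BASE}/zones/${zoneId}/bot_management`,
    init: {
      method: "PUT",
      headers: { Authorization: `Bearer ${token}`, "Content-Type": "application/json" },
      body: JSON.stringify({ fight_mode: false, enable_js: false }),
    },
  };
}

// Read current state, apply the change, then print the new state.
async function disableBotFightMode(http: HttpFn, zoneId: string, token: string): Promise<unknown> {
  const { url, init } = buildDisableRequest(zoneId, token);
  const before = await http(url, { headers: { Authorization: `Bearer ${token}` } });
  console.log("before:", JSON.stringify(await before.json()));
  const after = await http(url, init);
  if (!after.ok) throw new Error(`bot_management update failed: HTTP ${after.status}`);
  const result = await after.json();
  console.log("after:", JSON.stringify(result));
  return result;
}

// Usage (hypothetical environment variable names):
// disableBotFightMode(fetch, process.env.CF_ZONE_ID!, process.env.CF_API_TOKEN!);
```

Passing the HTTP function in as a parameter keeps the request builder easy to inspect in a dry run before anything is written to the zone.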

How we’re logging new LLM bots that hit our website

Free Cloudflare plans don’t include Logpush, the official “ship every HTTP log to your storage” feature. So we built our own observability layer in 50 lines of TypeScript:

- A Pages Function middleware (functions/_middleware.ts) runs at the edge on every request, before the static page is served.

- It inspects the user-agent against an explicit allow-list of known LLM crawlers (the same names we welcome in robots.txt).

- For every match, it builds a structured event and forwards it to a Discord webhook (real-time pings on bot arrivals) and to a generic JSON webhook (for queryable archive). Console logs go to Cloudflare Pages real-time logs as a backup.

- Each event also classifies the request by content kind: html, llms-text (/llms.txt), llms-full (/llms-full.txt), sitemap, robots, manifesto, or manifest. That tells us at a glance whether bots are crawling our polished HTML pages or our LLM-friendly text artifacts, which is itself one of the most interesting things to watch.
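The matching and classification steps in the list above could look roughly like this in TypeScript. Treat it as a sketch: the token list is a subset, the function names are hypothetical, and the paths assumed for the manifesto and manifest kinds are guesses, not the real routes.

```typescript
type ContentKind =
  | "html" | "llms-text" | "llms-full" | "sitemap" | "robots" | "manifesto" | "manifest";

// Illustrative subset of user-agent tokens; a real allow-list would mirror robots.txt.
const BOT_TOKENS: string[] = [
  "GPTBot", "OAI-SearchBot", "ClaudeBot", "PerplexityBot", "Bytespider", "CCBot", "Amazonbot",
];

// Returns the first matching crawler token, or null for ordinary visitors.
function detectBot(userAgent: string): string | null {
  const ua = userAgent.toLowerCase();
  return BOT_TOKENS.find((token) => ua.includes(token.toLowerCase())) ?? null;
}

// Maps a request path to a content kind.
// ASSUMPTION: the /manifesto prefix and the manifest file names are placeholders.
function classifyContent(pathname: string): ContentKind {
  if (pathname === "/llms.txt") return "llms-text";
  if (pathname === "/llms-full.txt") return "llms-full";
  if (pathname === "/robots.txt") return "robots";
  if (pathname.startsWith("/sitemap")) return "sitemap";
  if (pathname.startsWith("/manifesto")) return "manifesto";
  if (pathname.endsWith(".webmanifest") || pathname === "/manifest.json") return "manifest";
  return "html";
}
```

User-agent matching is cheap but trivially spoofable, which is one reason a second, fingerprint-based source is worth keeping alongside it.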

The middleware never holds the response up on logging. It awaits context.next() in-line to get the response, then hands the webhook calls to context.waitUntil() so they run in the background after the response has been returned. Bot detection happens in-line; webhook delivery does not.

Asset requests (.css, .js, .png, fonts, images) are skipped to keep the signal clean. We log HTML, .txt, .xml, and JSON requests only, which is exactly what bots crawl when they’re trying to understand a site.
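A stripped-down version of that request flow might look like the sketch below. It is not our production middleware: the context interface is a minimal stand-in for the Pages Functions context, the DISCORD_WEBHOOK_URL binding name and message format are hypothetical, and the bot and asset patterns are shortened.

```typescript
// Minimal stand-in for the Pages Functions context (only the fields used here).
interface MiddlewareContext {
  request: { url: string; headers: { get(name: string): string | null } };
  next(): Promise<unknown>;
  waitUntil(promise: Promise<unknown>): void;
  env: { DISCORD_WEBHOOK_URL?: string }; // hypothetical binding name
}

const BOT_PATTERN = /GPTBot|OAI-SearchBot|ClaudeBot|PerplexityBot|Bytespider|CCBot/i;
const ASSET_PATTERN = /\.(css|js|png|jpe?g|gif|svg|webp|ico|woff2?|ttf)$/i;

// Log only known bots, and only on non-asset paths.
function shouldLog(pathname: string, userAgent: string): boolean {
  return !ASSET_PATTERN.test(pathname) && BOT_PATTERN.test(userAgent);
}

export async function onRequest(context: MiddlewareContext): Promise<unknown> {
  // Serve the page first; logging must never block it.
  const response = await context.next();
  const { pathname } = new URL(context.request.url);
  const userAgent = context.request.headers.get("user-agent") ?? "";
  const webhook = context.env.DISCORD_WEBHOOK_URL;
  if (webhook && shouldLog(pathname, userAgent)) {
    const body = JSON.stringify({ content: `Bot hit: ${pathname} | ${userAgent}` });
    // waitUntil keeps the webhook call alive after the response is returned.
    context.waitUntil(
      fetch(webhook, { method: "POST", headers: { "content-type": "application/json" }, body })
        .catch((err) => console.error("webhook failed", err)),
    );
  }
  return response;
}
```

The catch on the webhook call matters: a failing Discord endpoint should show up in the logs, not in the visitor's response.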

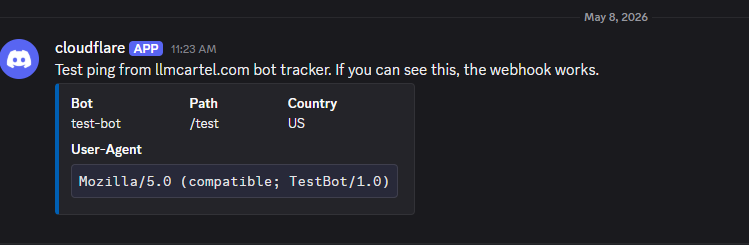

Here’s what a single bot ping looks like in our Discord channel:

ChatGPT, ClaudeBot), the kind of content (html vs llms-full), country, ASN, Cloudflare ray ID, and the full user-agent.In parallel, we also pull data from Cloudflare’s AI Crawl Control dashboard as a cross-reference. AI Crawl Control identifies bots via Cloudflare’s own fingerprinting (network signals + ASN + UA + behavior), which is a different methodology than our middleware’s UA-only matching. We treat the middleware as the canonical record for this case study; AI Crawl Control is the second opinion. When the two diverge, the divergence itself becomes a data point worth writing about. See the Day 1 results section for the first-24h breakdown from both sources.

I’m going to update this post any time something major happens. I have a Discord webhook set up to ping me any time a new bot hits us, so this will be a really fun case study. I love anything meta.

Things I’ll publish from these logs as they accumulate:

- The name of the bot / LLM (e.g. ChatGPT, ClaudeBot, PerplexityBot, GoogleOther, Bytespider).

- First-seen-by-bot dates (e.g. “ClaudeBot first crawled us 2026-05-09 17:42 UTC, six hours after publish”).

- Crawl frequency per bot (e.g. “Bytespider hit 14 paths in 24 hours; GPTBot hit 2 paths in a week”).

- Most-crawled and least-crawled paths (e.g. “/llms-full.txt got 23 hits this week, /about got 1, /contact got 0”).

- Country and ASN distribution (e.g. “CCBot from Amazon US-East, Bytespider from Singapore, Applebot from Apple ASNs across CA and NL”).

- Response codes (200s vs anything else, e.g. “ClaudeBot all 200, but PerplexityBot got 4 404s on broken inbound links”).

- Anomalies and surprises, including bots with strange names that look like LLMs but aren’t (there is no shortage of those, and figuring out which is real is half the fun).
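Most of the items in this list reduce to one pass over the event log. A minimal TypeScript sketch of that aggregation follows; the BotEvent shape and the summarize name are assumptions for illustration, not the published schema.

```typescript
// Hypothetical event shape; timestamps are ISO 8601 in UTC (e.g. "2026-05-09T17:42:00Z").
interface BotEvent { bot: string; path: string; status: number; timestamp: string }

interface BotSummary {
  firstSeen: string;             // earliest timestamp for this bot
  hits: number;                  // total requests
  paths: Record<string, number>; // hits per path
  non2xx: number;                // requests that did not return a 2xx status
}

function summarize(events: BotEvent[]): Record<string, BotSummary> {
  const out: Record<string, BotSummary> = {};
  for (const e of events) {
    let s = out[e.bot];
    if (!s) {
      s = { firstSeen: e.timestamp, hits: 0, paths: {}, non2xx: 0 };
      out[e.bot] = s;
    }
    // Same-format UTC ISO strings sort lexicographically, so string comparison is enough.
    if (e.timestamp < s.firstSeen) s.firstSeen = e.timestamp;
    s.hits += 1;
    s.paths[e.path] = (s.paths[e.path] ?? 0) + 1;
    if (e.status < 200 || e.status > 299) s.non2xx += 1;
  }
  return out;
}
```

Crawl frequency per bot then falls out as hits divided by the length of the window being reported.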

When to expect AI bots to crawl a brand-new website

Educated guesses for the record, so we can be honest about how wrong we were when the data lands:

- Within 24 to 72 hours: the most prolific crawlers (Bytespider, Amazonbot, GoogleOther) will probably show up just from sitemap discovery. They aggressively poll fresh DNS records.

- Within 7 days: GPTBot, ClaudeBot, and PerplexityBot likely make a first pass if they’ve picked up our domain through any signal (Common Crawl seed, Cloudflare DNS resolution patterns, certificate transparency logs).

- Within 30 days: training-data crawlers (Google-Extended, Applebot-Extended, Common Crawl) probably do a baseline pass.

- Beyond 30 days: the slower, retrieval-grade re-crawls, the ones that decide whether AI answers actually cite us versus merely know we exist, start showing up. This is the lag we expect to be longest.

If none of this happens? That’s also data. The whole point of publishing predictions is to be measured against them.
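To keep ourselves honest, the predictions above can be encoded as data and scored against first-seen timestamps. The TypeScript below is one possible sketch; the Prediction shape and the scorePredictions name are hypothetical, and the windows would be the ones guessed above (72 hours, 7 days, 30 days).

```typescript
interface Prediction { bot: string; withinHours: number }

interface ScoredPrediction extends Prediction {
  actualHours: number | null; // null when the bot has not been seen yet
  hit: boolean;               // true when the bot arrived inside the predicted window
}

function scorePredictions(
  predictions: Prediction[],
  firstSeen: Record<string, string | undefined>, // bot -> ISO timestamp of first crawl
  publishedAt: string,                           // ISO timestamp when the clock started
): ScoredPrediction[] {
  const start = Date.parse(publishedAt);
  return predictions.map((p) => {
    const seen = firstSeen[p.bot];
    const actualHours = seen ? (Date.parse(seen) - start) / 3600000 : null;
    return { ...p, actualHours, hit: actualHours !== null && actualHours <= p.withinHours };
  });
}

// Example windows from the guesses above: 72 h, 7 days (168 h), 30 days (720 h).
const PREDICTIONS: Prediction[] = [
  { bot: "Bytespider", withinHours: 72 },
  { bot: "GPTBot", withinHours: 168 },
  { bot: "CCBot", withinHours: 720 },
];
```

A bot that never shows up stays at null and counts as a miss, which is the outcome the paragraph above calls "also data".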

Day 1: the first crawls are already on the board

Headline finding: GPTBot crawled this case study within ~1 hour of publishing. We hit publish, walked away to grab coffee, came back, and OpenAI’s crawler had already pulled the post. That’s about as tight a feedback loop between “site invites bot” and “bot accepts invitation” as you can ask for on a brand-new domain with zero outside signal.

Zooming out from the post itself to the whole site, here’s the picture roughly 24 hours after we disabled Bot Fight Mode, pulled from Cloudflare’s AI Crawl Control dashboard:

- GPTBot (OpenAI) — 6 successful requests

- Googlebot (Google) — 6 successful requests

- BingBot (Microsoft) — 2 successful requests

- ClaudeBot (Anthropic) — 1 successful request

- PerplexityBot, Bytespider, Amazonbot, FacebookBot — 0 requests

One nuance: Cloudflare’s AI Crawl Control aggregates by operator, so “OpenAI” in the dashboard rolls up GPTBot plus its sibling crawlers. Splitting the same window with the GraphQL Analytics API: 5 of the 6 OpenAI hits were GPTBot; the 6th was OAI-SearchBot, OpenAI’s distinct answer-engine crawler. Two different OpenAI crawlers landing on the site within 24 hours is itself a finding — OpenAI is hitting us with one bot for training corpus collection and a separate bot for live answer retrieval.

Plus 6 unsuccessful (non-2xx) requests. Drilling into those turned out to be the most interesting data point of the day — they came almost entirely from BingBot probing for sitemap and feed conventions we don’t ship. Full breakdown in the next section.

Three things stand out before we even have a full week of data:

- GPTBot tied Googlebot for volume. OpenAI’s crawler hit us as aggressively as Google’s flagship bot in the first day. Whatever shape that competition takes a year from now, today it’s parity.

- The training-data crawlers haven’t shown up yet. Bytespider, Amazonbot, Common Crawl, Applebot-Extended, Google-Extended — all sitting at zero. The bots that did show up are the live-retrieval / answer-engine ones. That’s a real ordering, not noise: the bots that power today’s AI answers arrived first; the bots that feed tomorrow’s training runs are slower.

- ClaudeBot appeared but only once. Anecdotally consistent with Anthropic running a lighter crawl rhythm than OpenAI, but n=1 means anecdote is all it is so far. Worth watching whether ClaudeBot picks up frequency or stays sparse.

Methodology footnote. This snapshot comes from Cloudflare’s AI Crawl Control, which identifies bots via Cloudflare’s own fingerprinting (network signals, ASN, behavior, plus user-agent). Our middleware logs (the canonical record for this case study) match by user-agent string only. Both are valid; they’ll usually agree, and any time they disagree that itself is an interesting data point we’ll flag. Going forward we’ll publish numbers from both sources side by side.
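Putting the two sources side by side is a small diff over per-bot counts. A minimal TypeScript sketch, assuming both sources have already been reduced to a bot-to-count map (the diffSources name and row shape are hypothetical):

```typescript
type Counts = Record<string, number>;

interface SourceDiff { bot: string; middleware: number; crawlControl: number; delta: number }

// Returns only the bots where the two sources disagree, sorted by bot name.
function diffSources(middleware: Counts, crawlControl: Counts): SourceDiff[] {
  const bots = Array.from(
    new Set([...Object.keys(middleware), ...Object.keys(crawlControl)]),
  ).sort();
  return bots
    .map((bot) => {
      const m = middleware[bot] ?? 0;
      const c = crawlControl[bot] ?? 0;
      return { bot, middleware: m, crawlControl: c, delta: m - c };
    })
    .filter((row) => row.delta !== 0);
}
```

A positive delta means the user-agent matcher saw requests that Cloudflare's fingerprinting did not attribute to that bot, which is a hint to look for spoofed user-agents; a negative delta suggests the allow-list is missing a token.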

Twenty-four hours is too short to draw conclusions about anything except “we exist to crawlers now.” Treat this as week-zero noise. The point of writing it down today is to anchor against it later, when we have a month of data to argue about.

What BingBot does with a brand-new site

Of all the crawlers that touched us in the first 24 hours, BingBot was the most aggressive about discovery probing — and that turned out to be the most useful behavioral signal of the entire day. Filtering Cloudflare’s analytics down to BingBot’s 4xx requests in the window gives a clean picture of exactly what Microsoft’s crawler tries when it lands on an unknown domain:

- 2 hits on /atom.xml: Atom feed for blog/article discovery

- 2 hits on /sitemap.xml.gz: gzipped sitemap (used by some CMSes for size)

- 1 hit on /sitemap.txt: plain-text sitemap (one URL per line)

- 1 hit on /sitemap_index.xml: sitemap-of-sitemaps (Yoast/WordPress convention)

- 1 hit on /sitemaps.xml: plural-form sitemap convention

Alongside these probes, BingBot also pulled our actual /sitemap.xml and a handful of HTML pages (4 successful 200 responses on the same day). So BingBot’s first-day playbook on a new site looks like: read the advertised sitemap, then probe every plausible alternate sitemap convention, then probe for an Atom feed. It’s looking for a content firehose, in any shape we’ll give it.
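The filter we ran in Cloudflare's analytics can be reproduced over any request log. Here is a minimal TypeScript sketch, assuming a simple hit record (the Hit shape and the wishlist name are hypothetical):

```typescript
interface Hit { bot: string; path: string; status: number }

// Counts 4xx responses per path for one bot, most-requested first.
function wishlist(hits: Hit[], bot: string): [string, number][] {
  const counts = new Map<string, number>();
  for (const h of hits) {
    if (h.bot !== bot || h.status < 400 || h.status > 499) continue;
    counts.set(h.path, (counts.get(h.path) ?? 0) + 1);
  }
  return Array.from(counts.entries()).sort(
    (a, b) => b[1] - a[1] || a[0].localeCompare(b[0]),
  );
}
```

Run against a log shaped like the list above, the top of the result is the feed or sitemap variant the crawler asked for most often.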

Three things this told us:

- BingBot wants an Atom feed. It asked twice in 24 hours and we didn’t ship one. We’re shipping /atom.xml in a follow-up commit because (a) it’s free, (b) Astro can generate it at build time with zero client-side JavaScript, and (c) Bing literally told us what it’s looking for. Worth doing for the LLM bots too: an Atom feed is a structured, summary-rich content shape that’s friendly to retrieval pipelines.

- BingBot is much more aggressive about sitemap probing than the LLM crawlers. Neither GPTBot, ClaudeBot, OAI-SearchBot, nor any other LLM crawler probed alternate sitemap conventions in the first day. They went straight for our advertised /sitemap.xml and HTML pages and stopped. That tracks with what each is optimizing for: classic search-engine crawling has to be exhaustive about discovery shape because sitemaps and feeds are its primary discovery channels. LLM crawlers can be more conservative because they have other discovery signals (queries from real users, citation graphs, model retrievals, training-data seeds).

- 404 noise is signal. If you only watch your 2xx logs, you miss what crawlers wish you had. The 4xx paths are a free wishlist from search engines, especially for a brand-new domain that hasn’t taught the crawler anything about its content shape yet. We’re going to keep watching this column going forward.

This is exactly the kind of small finding the case study was set up to surface. We never would have noticed the Atom-feed gap from looking at a normal traffic dashboard; it only showed up because we were hunting for behavioral signal in the 4xx column.
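For anyone closing the same gap, an Atom feed is small enough to generate by hand at build time. The TypeScript below is a framework-agnostic sketch, not the Astro integration we will actually ship: the Post shape and function names are hypothetical, and siteUrl is assumed to have no trailing slash.

```typescript
// Hypothetical post record; `updated` is an RFC 3339 timestamp in UTC.
interface Post { title: string; url: string; updated: string }

function escapeXml(text: string): string {
  return text
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

function buildAtomFeed(siteUrl: string, siteTitle: string, author: string, posts: Post[]): string {
  // Feed-level <updated> is the newest entry; same-format UTC timestamps sort as strings.
  const newest = posts.map((p) => p.updated).sort().pop() ?? new Date(0).toISOString();
  const entries = posts.map((p) =>
    [
      "  <entry>",
      `    <title>${escapeXml(p.title)}</title>`,
      `    <link href="${escapeXml(p.url)}"/>`,
      `    <id>${escapeXml(p.url)}</id>`,
      `    <updated>${p.updated}</updated>`,
      "  </entry>",
    ].join("\n"),
  );
  return [
    '<?xml version="1.0" encoding="utf-8"?>',
    '<feed xmlns="http://www.w3.org/2005/Atom">',
    `  <title>${escapeXml(siteTitle)}</title>`,
    `  <link href="${escapeXml(siteUrl)}"/>`,
    `  <link rel="self" href="${escapeXml(siteUrl)}/atom.xml"/>`,
    `  <id>${escapeXml(siteUrl)}/</id>`,
    `  <updated>${newest}</updated>`,
    `  <author><name>${escapeXml(author)}</name></author>`,
    ...entries,
    "</feed>",
  ].join("\n") + "\n";
}
```

Using each post's URL as its entry id keeps ids stable across rebuilds, which is what feed readers rely on to avoid showing duplicates.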

Collateral fixes shipped alongside the bot work

Because we were already in the code, we shipped a few related fixes on the same day:

- Button contrast WCAG fix. A separate audit caught that our cyan button background (#0077e6 on #f5f5f7) measured 4.03:1, below the 4.5:1 WCAG AA minimum. We introduced a darker --btn-bg: #005bb3 (6.1:1) for button surfaces while keeping the brand cyan for everything else. Documented in the ada changelog.

- Web manifest fix. Added purpose: "any maskable" to the PWA icons, which closes a 1-point Lighthouse PWA audit miss.

- Bottom-nav icon emboldening. Bumped stroke-width on the inline SVG icons in the mobile bottom nav from 2 to 2.5 to better match the visual weight of the filled icons next to them.
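The contrast figures in the first fix can be checked with the WCAG relative-luminance formula. Here is a small TypeScript sketch of that calculation; the function names are ours for illustration, and it only handles six-digit hex colors.

```typescript
// Linearize one 8-bit sRGB channel per the WCAG relative-luminance definition.
function channel(value: number): number {
  const c = value / 255;
  return c <= 0.03928 ? c / 12.92 : Math.pow((c + 0.055) / 1.055, 2.4);
}

// Relative luminance of a "#rrggbb" color.
function luminance(hex: string): number {
  const n = parseInt(hex.replace("#", ""), 16);
  const r = (n >> 16) & 0xff;
  const g = (n >> 8) & 0xff;
  const b = n & 0xff;
  return 0.2126 * channel(r) + 0.7152 * channel(g) + 0.0722 * channel(b);
}

// WCAG contrast ratio: (lighter + 0.05) / (darker + 0.05).
function contrastRatio(a: string, b: string): number {
  const la = luminance(a);
  const lb = luminance(b);
  return (Math.max(la, lb) + 0.05) / (Math.min(la, lb) + 0.05);
}

// The two pairs from the fix above: old brand color, then the darker --btn-bg.
console.log(contrastRatio("#0077e6", "#f5f5f7").toFixed(2));
console.log(contrastRatio("#005bb3", "#f5f5f7").toFixed(2));
```

The first pair should land just above 4.0 and the second just above 6.1, in line with the audit numbers, so only the darker color clears the 4.5:1 AA threshold.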

FAQ: how AI bots discover a new business

How is this different from “I checked our site in ChatGPT and it doesn’t know us yet”?

Anecdotal model checks are fine but they conflate two questions: “did the bot crawl us?” and “did the model retain us?” This post answers the first one with hard logs. The second question is downstream and harder.

Why publish numbers that might embarrass you?

Because the alternative, only publishing wins, is what every other agency does, and AI models are very good at ignoring puffery. A running log is harder to fake and more useful as a signal.

Will you publish the raw logs?

Aggregates yes, with bot/path/date/country breakdowns. Raw logs no, they contain visitor IPs and query strings, both of which deserve privacy. We’ll publish the schema and the queries we run.
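As a rough idea of what stripping the private fields could look like before aggregation, here is a TypeScript sketch. Both record shapes are hypothetical stand-ins, not the schema we will publish.

```typescript
// Hypothetical raw log record, including the fields we will not publish.
interface RawEvent { bot: string; url: string; ip: string; country: string; timestamp: string }

// Hypothetical public record: bot, path, date, and country only.
interface PublicEvent { bot: string; path: string; date: string; country: string }

function toPublicEvent(raw: RawEvent): PublicEvent {
  return {
    bot: raw.bot,
    path: new URL(raw.url).pathname,  // drops the query string and fragment
    date: raw.timestamp.slice(0, 10), // YYYY-MM-DD from an ISO 8601 timestamp
    country: raw.country,
  };
}
```

Building the public record field by field, instead of deleting keys from the raw one, means a new private field added later cannot leak by default.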

Can I do this on my own site?

Yes. The middleware code (functions/_middleware.ts) is short enough to copy. The harder part is having an honest baseline: if you’ve been live for years, your “first-seen” dates are already in the past. This experiment is only clean from a clean slate.

What if no bots ever come?

Then the answer to the headline question is “longer than 30 days, possibly forever, without external signal,” and that’s the most actionable lesson you can give a founder. Discoverability isn’t automatic.